Results from mobile usability testing

Last week I conducted some guerrilla usability testing on a mobile device in the University’s Library. The main purpose of the test was to find out whether the new digital prospectus pages can be used on mobile devices. This post provides an overview of the users’ experiences and what digicomms needs to do next with the pages to ensure they’re meeting user needs.

Who took the test?

In total, seven participants were tested. All were undergraduates, and some were international students. Their courses varied, ranging from International Relations to Mathematics and English.

General feedback from participants was that their experience of the website is usually positive. Interestingly, as with the initial usability test, we found that participants highlighted the importance of awards and accreditations for Schools and the University over course information when exploring courses as a prospective student.

Some participants stated that they found the differences between iSaint/MySaint and MMS confusing. One explicitly stated: “there’s lots of different websites to use that all do the same thing”.

Highlights from the test

In general, user experience with new subject and undergraduate pages was improved when compared with trying to find the same information on the existing School webpages.

However, one major drawback of the new pages that was evident on mobile, but not in desktop testing, was that students did not use the navigation bar at the top of the undergraduate and subject pages.

This, combined with students’ general unwillingness to scroll beyond 50% of the page, resulted in students remarking on how long the pages were and how much text they contained. One participant stated that this probably wasn’t the case on desktop, but on a phone extraneous text can seriously impact user experience.

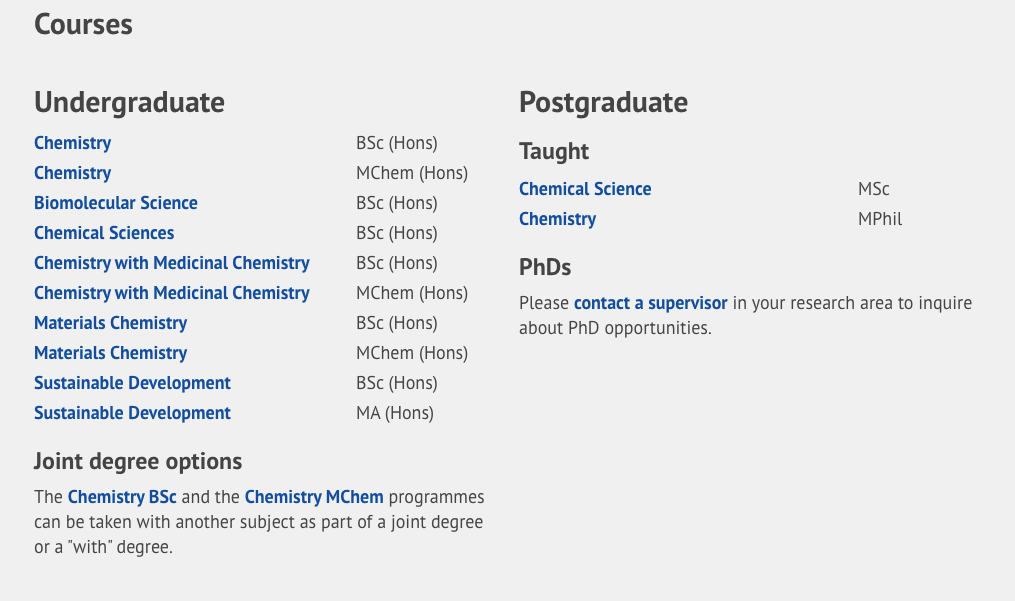

Despite digicomms’ work rewording the joint degree section on the subject pages (an outcome of our last usability testing), joint degree information was still not easy to find. However, I feel that this isn’t due to the wording on the subject pages, as this time the text was noticed. Instead, this issue is connected to students not using the navigation or scrolling far enough down the page (joint degree information is nearer the bottom than the top of the page).

Finally, one other major factor that hindered students in finding joint degree information was that the links within the joint degree text on the subject pages didn’t link directly to the joint degree sections. Instead, they linked only to the courses that could be taken as a joint degree.

Actions

Going forward, the digicomms team are going to further reassess how joint degree information is presented to prospective students. This will include linking directly to the joint degree section within individual undergraduate pages.

We are also considering creating a more general joint degree information page within Study. This will ensure that students searching for something like “joint Honours degrees” on Google or within the website will be taken directly to relevant information.

This round of testing also highlighted areas for improvement in the test itself. For one, we realised that students don’t want free donuts, so next time we’ll need an alternative incentive. More serious, however, is the need to clearly explain what a prospective student is, as one student began a task thinking and acting as a current student.

In addition, for the next round of testing, participants should start on a specific webpage rather than on the homepage or Google, so that they spend more time testing the area of the website we need them to. In this test, participants started on the University’s homepage, which led them to complete tasks using School sites and the Study pages, whereas we wanted to see how the new digital prospectus pages held up.

While allowing the student to ‘organically’ find information from a more general source can be illustrative, sometimes guiding them a little bit of the way can help produce more refined and useful results.